AI Fluency

Governing the consequences of human–AI collaboration — strengthening judgment, accountability, and strategic resilience in individuals and organizations.

Artificial intelligence is no longer a peripheral tool. It now participates directly in analysis, strategic reasoning, synthesis, decision-making, and operational execution.

This is not simple automation. It is collaboration.

Collaboration actively shapes consequences. Decisions about problem scope, embedded assumptions, accepted trade-offs, risk tolerance, and quality thresholds determine whether AI strengthens or weakens judgment over time.

Without deliberate governance, collaboration drifts toward convenience — and cognitive capability erodes gradually rather than catastrophically.

Yet most conversations today are about writing prompts, selecting tools, and scaling deployments. Few focus on governing the collaboration itself.

AI Fluency exists as a focus area because ungoverned collaboration creates invisible risk — cognitive fragility masked by short-term productivity.

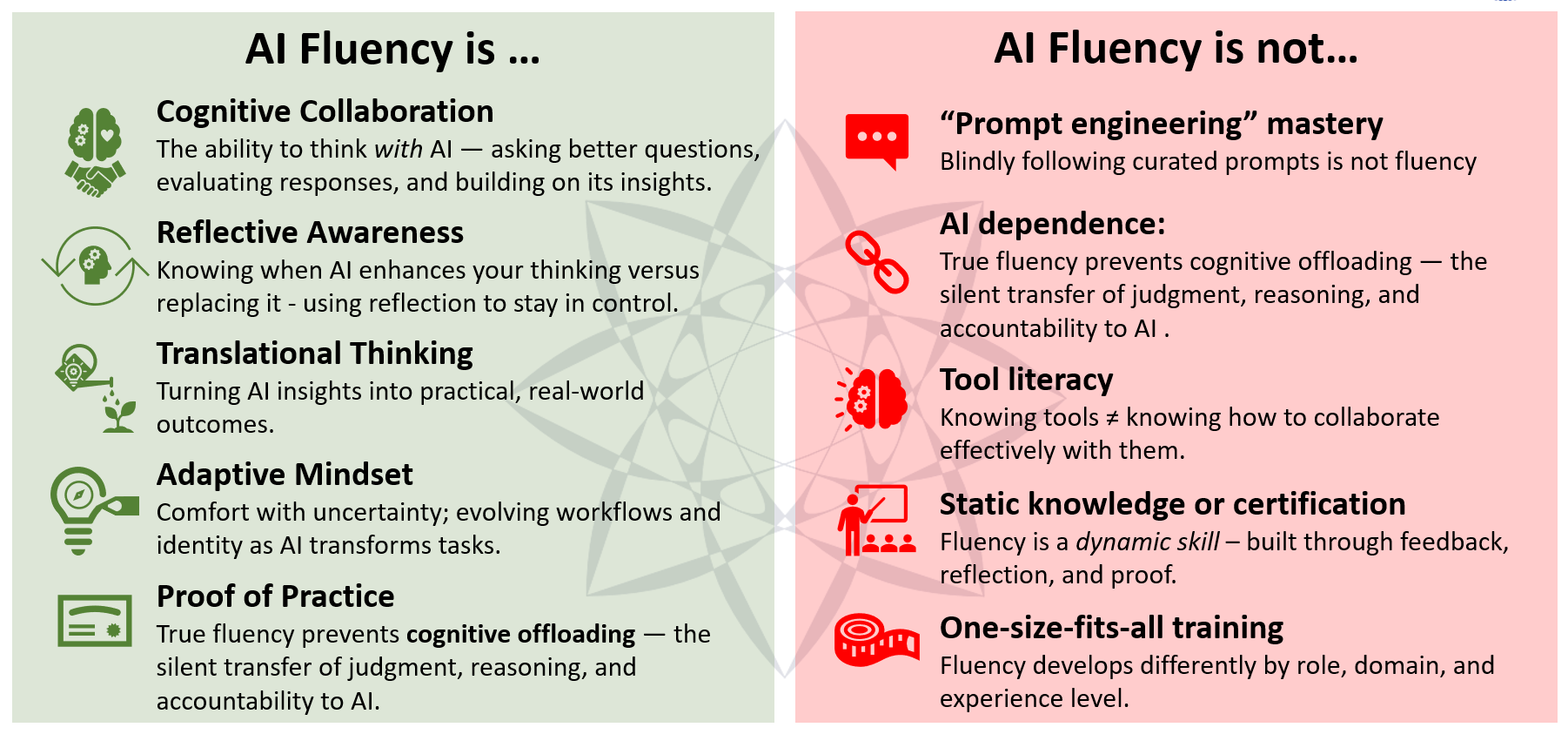

AI Fluency is the disciplined, continuously improving governance of consequences in human–AI collaboration — ensuring both valuable outcomes and strengthened human cognitive capacity.

“Consequence” includes external impact (legal, financial, ethical, strategic, reputational) and internal impact (cognitive strengthening vs. cognitive atrophy through offloading).

It is not prompt engineering. It is not tool mastery. It is the practice of leading collaboration with AI so that judgment is amplified — not quietly replaced.

AI fluency is not what you know about AI — it’s how you govern collaboration with it.

This focus area studies how those governance functions show up in real work — and how to strengthen them without creating dependency.

Coincentives Labs treats AI fluency as a measurable, governable discipline. Our work in this area is organized as a doctrine stack: what changed, what should be measured, what legacy methods miss, and how credible proof remains trustworthy over time.

The shift: outputs got cheaper, so judgment and consequence governance have become the differentiator.

Read DF-001: A high-level measurement approach using governance functions and evolutionary phases.

Read DF-002: A critique of outdated measurement tools optimized for older technology and weaker cheating surfaces.

Read DF-003: Why proof must be verifiable and evolvable, and what makes credentials trustworthy (anti-gaming, stackable growth, tamper/misuse resistance).

What is AI fluency?

AI fluency is the disciplined governance of consequences in human–AI collaboration—ensuring valuable outcomes and strengthened human judgment rather than cognitive offloading.

Why is AI fluency a focus area now?

Because AI increasingly participates in reasoning, not just execution. Without governance, collaboration drifts toward convenience and creates invisible risk—both external consequences and internal cognitive atrophy.

How does Coincentives Labs approach AI fluency?

Coincentives Labs approaches AI fluency as a governable discipline: defining what changed, what should be measured, why legacy assessments fail, and how proof remains verifiable and trustworthy over time.

What is the AI Fluency Engine?

The AI Fluency Engine is Coincentives Labs’ measurement lens for human–AI collaboration quality, organized around governance functions and evolutionary phases.

AI Fluency is not a training module. It is an organizational inquiry into how collaboration with AI is shaping judgment, accountability, and resilience.

• Are we governing consequences — or merely generating outputs?

• Are incentives aligned with reasoning quality, or with speed?

• Can teams articulate and defend AI-assisted decisions?

• Is AI strengthening independent thinking — or quietly weakening it?

We work with organizations that recognize AI adoption is not merely a technology shift but also a cognitive one.

Engagement typically begins with structured inquiry: mapping collaboration patterns, identifying cognitive risk points, and establishing shared norms for governed AI collaboration.