Most enterprises are racing to scale AI. Very few are designing for how AI reshapes human judgment.

Our research shows that the long-term success of enterprise AI does not depend on how fast AI is deployed, but on the quality of the AI collaborative culture being nurtured. A high-quality culture compounds human capital over time. A low-quality one degrades it — quietly, but exponentially.

The critical question is not whether AI is being adopted, but whether organizations are fostering collaborative thinking (AI cognitive augmentation) or outsourcing thinking to AI (cognitive offloading).

The key ingredient in building a high-quality AI collaboration culture is organizationally shared AI Fluency — a common capability across roles and levels. It is the most reliable indicator of true readiness for AI transformation.

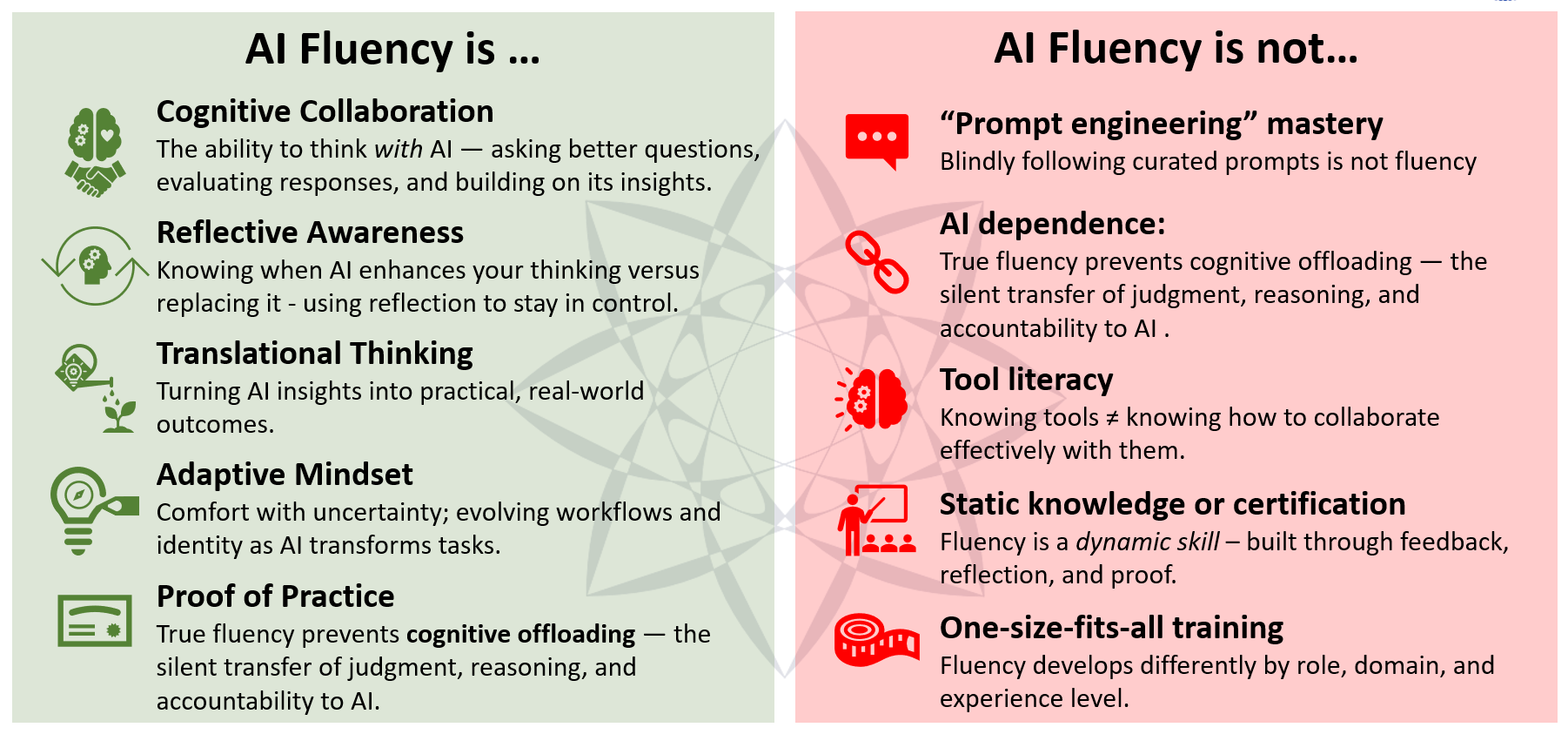

1. What Is AI Fluency (and What It Is Not)

AI Fluency is an organizational capability, not just an individual skill. It is the ability to achieve measurable outcomes through deliberate, first-principles thinking in structured collaboration with AI, supported by shared norms for reasoning, challenge, and accountability. In essence, it is the deliberate practice of governing the consequences of human–AI collaboration.

Crucially, AI Fluency must be organizationally shared. It is not about pockets of expertise, but a common capability across roles that shapes how decisions are made with AI.

AI Fluency is not what you know about AI; it is how you think and collaborate with it.

AI Fluency ultimately determines whether human intelligence is enhanced through AI (cognitive augmentation) or quietly outsourced to it (cognitive offloading).

2. What the Lab Observes Across Enterprises

Across organizations and AI deployments, four patterns repeat.

Observation 1: AI adoption is outpacing cognitive readiness

AI tools are being deployed faster than organizations are developing capabilities and shared norms for collaborative reasoning with AI. Teams lack clear expectations around when to trust AI outputs, when to challenge them, and how decisions should be traced back to human intent. Simply put, they lack the organizational fluency required to reason with AI. As a result, low-quality cultures of AI collaboration are taking root.

Observation 2: Asymmetric distribution of AI fluency undermines effectiveness

AI Fluency is rarely evenly distributed. In some organizations, leadership is fluent but middle management is not, so vision outruns execution. In others, practitioners experiment productively while leadership lacks the mental models to scale what works. In both cases, AI initiatives fragment instead of compounding, progressively degrading the quality of the collaborative culture.

Observation 3: The drift toward cognitive offloading is real

Sustained reasoning is cognitively demanding, and systems naturally drift toward paths of least resistance. In the absence of deliberate design to foster AI fluency, AI gives a compelling reason to outsource thinking and defer judgment to it. This creates a structural risk: AI becomes a cognitive crutch, and over time humans lose confidence in their own judgment — reinforcing dependency and weakening organizational resilience.

Observation 4: Incentives decide the outcome

Whether the quality of the AI collaborative culture is high or low is not determined by technology choices. It is determined by incentives: what is rewarded, measured, promoted, and ignored. When behaviour that demonstrates AI Fluency is incentivized, the outcome is collaborative thinking with AI (cognitive augmentation) and a high-quality culture that eliminates the outsourcing of thinking to AI (cognitive offloading).

3. Why Incentives Are the Primary Enablers of AI Fluency

Incentives can mean many things. Over the course of our research, we concluded that incentives are not limited to compensation or bonus schemes. They also include:

- What gets measured

- What gets reviewed

- What gets rewarded

- What gets promoted

- And what gets ignored

Incentives are the operating system of human agency. They regulate the balance between automation and authorship, augmentation and dependency, speed and understanding.

When incentives reward speed and surface-level productivity, AI becomes a substitute for thinking. When they reward clarity of intent, quality of reasoning, and traceability of decisions, AI becomes a multiplier of judgment.

AI Fluency does not scale through training alone. It scales when organizations make thinking visible, valuable, and rewarded.

4. Human Capital Is at Risk

The long-term risk of scaling AI is not implementation failure. It is the erosion of the organization’s ability to think independently under uncertainty — the quiet degradation of human capital as reasoning is progressively offloaded to machines.

4.1 Cognitive Offloading Is a Human Capital Risk

When humans defer judgment to AI without reflection, skills decay. Decision-making becomes thinner. Over time, organizations lose the ability to explain why choices were made.

4.2 AI Fluency Preserves and Grows Human Capital

AI Fluency keeps humans cognitively engaged. It ensures that AI contributes synthesis while humans retain responsibility for judgment.

4.3 Incentives Decide the Outcome

Where incentives reward speed alone, human capital decays. Where they reward reasoning quality and traceability, intelligence compounds.

5. Implications for Leaders

- Recast AI enablement from tool training to first-principles thinking

- Redesign KPIs to reward reasoning quality and traceability

- Prioritize protecting human capital above all

- Align incentives with growth in AI Fluency

- Audit where judgment is being outsourced — a cognitive audit, not a technology audit

Closing Thought

AI will scale whether organizations are ready or not. The question is whether human capital scales with it.

The organizations that win with AI will not be the fastest to deploy it. They will be the ones that learn to think with it — deliberately and visibly.

The deeper structural dynamics of agency, incentives, and synthetic collaboration are explored in:

CL-J26-001-FE-001 — Designing for Agency: Incentives, Fluency, and the Architecture of Human–AI Collaboration

A field research essay examining how incentive design determines whether human capital compounds or decays in AI-augmented systems.